OpenAI's Dilemma

WSJ yesterday had an interesting report on why OpenAI is considering a sharper focus on enterprise. Some excerpts from the piece:

“OpenAI’s top executives are finalizing plans for a major strategy shift to refocus the company around coding and business users, recognizing that a “do everything all at once” strategy has put them on the defensive.

“We cannot miss this moment because we are distracted by side quests,” Simo told staff last week, according to remarks reviewed by The Wall Street Journal. “We really have to nail productivity in general and particularly productivity on the business front.”

Computing resources often shifted from one team to another at the last minute, and the company’s organizational structure grew complicated, the employees said. For example, OpenAI’s Sora team was housed under the research division, even though it was responsible for launching one of the company’s most high-profile products, they said.”

In a compute-constrained environment, it can be more expensive than usual to allocate compute to bets with a high degree of uncertainty around monetization, especially for an AI lab that requires enormous funding from investors to pursue its ambitions. It gets even more complicated if a competing AI lab can consistently allocate compute to products and services that drive materially higher revenue and margin than OpenAI can. Right now, AI monetization appears to be much clearer and more immediate in enterprise and productivity settings. The rise of agents can make this distinction between enterprise and consumer monetization even clearer. The consumer market can move at a glacial pace due to ingrained habits, whereas enterprise customers can switch or transform their workflows much faster the moment the ROI makes compelling sense. If Anthropic ends up dominating that market, it is conceivable that OpenAI’s ability to monetize its compute allocation will increasingly pale in comparison with Anthropic’s, which can directly affect OpenAI’s fundraising ambitions and its ability to maintain a valuation gap with Anthropic if Anthropic surpasses it in revenue. So, it makes sense to me why OpenAI considers it rather urgent not to cede the enterprise opportunity to Anthropic.

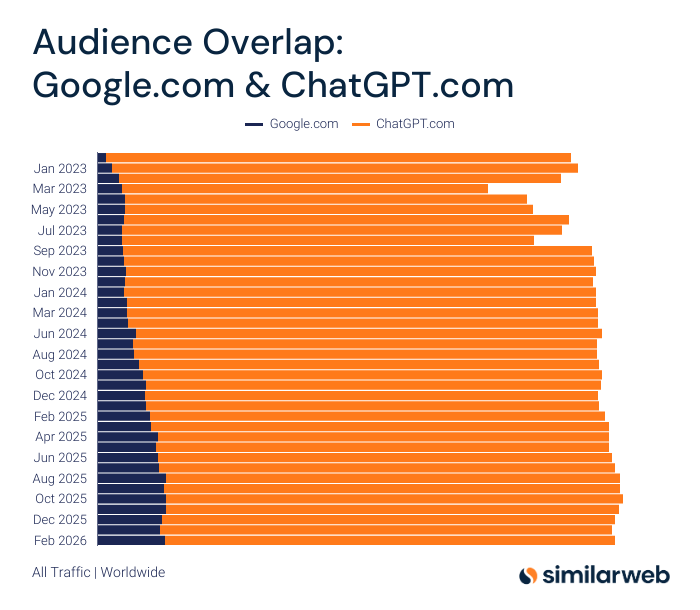

One “advantage” that Anthropic has is that they don’t have the “baggage” of almost one billion free weekly active users. I’m saying “baggage” with my tongue in cheek because anyone would kill to have such baggage. The problem is that in a compute-constrained environment, for a deeply FCF-negative company, it can be super expensive to host one billion free users. Of course, OpenAI cannot be too generous for too long here, and hence they’re launching ads. I think a big moment of truth for OpenAI in the next 6-12 months will be how well they are able to monetize their free users. If the monetization is too poor or users react quite negatively to ads on ChatGPT, I wonder how aggressive OpenAI will remain in pursuing the incremental user. It already doesn’t help that once incumbents such as Google integrated their models in both Gemini and traditional search, the impetus for the incremental free user to go to ChatGPT started showing signs of exhaustion. For example, while the percentage of Google visitors who also visited ChatGPT kept growing for much of the 2023-24 period, this number has been largely flat since August 2025. As per Similarweb, the percentage of Google visitors who also visited ChatGPT was 14.3% in August 2025. Their most recent data, for February 2026, was 14.1%.

You can argue that OpenAI can serve the free users with a materially cheaper model and keep the most advanced models for paying subscribers. They’re currently using GPT-5.2 for free users, whereas GPT-5.4 is only available to paying subscribers. Could they use an even older model to serve free users, or can they keep using GPT-5.2 for years to serve free users? I have no doubt that deploying GPT-5.2 in 2028 will be significantly cheaper for OpenAI, and they would love to do exactly that if they didn’t have competition. The challenge is that Google (and perhaps Meta in the future) may use better models than GPT-5.2 to power their AI experience for free users. If free users can get a better experience on Google or Meta, OpenAI may have a much harder time retaining and particularly attracting incremental free users. Perhaps OpenAI is okay with that outcome as long as they are able to deploy their compute in areas with much better visibility into monetization than powering free ChatGPT users.

Of course, if ChatGPT stops fighting tooth and nail to grow its free userbase, that can be a huge boon for Alphabet and Meta. But you may wonder: if OpenAI finds consumer AI is not as worthwhile as enterprise AI, does it matter if Meta and Alphabet gain a material share of the incremental users going forward? Unlike OpenAI, Alphabet (or Meta) doesn’t need immediate visibility into revenue as long as they believe there is a lot of money to be made in consumer AI. Indeed, I do think consumer AI can prove to be a lot easier to scale for incumbent companies with existing distribution to billions of users than for companies such as OpenAI and Anthropic, who must spend incremental S&M dollars as well as constrained compute to stay in this space in the long term.

While OpenAI faced a bit of a backlash online for introducing ads on ChatGPT, Meta is quite open that they will use your interactions with Meta AI to power ads that you will see elsewhere on their platform. From Meta’s own blog post:

“if you chat with Meta AI about hiking, we may learn that you’re interested in hiking — just as we would if you posted a reel about hiking or liked a hiking-related Page. As a result, you might start seeing recommendations for hiking groups, posts from friends about trails, or ads for hiking boots.”

Unlike OpenAI, though, Meta won’t show you ads directly in the “Meta AI” chat, a placement that may put users off by making them wonder whether the chatbot’s answer itself is influenced by the advertiser. The user will see such ads, just as they normally would, while scrolling any of Meta’s apps. The size of the opportunity is quite easy to grasp, and it’s not small. Let’s imagine Meta is able to get 1 billion DAU on “Meta AI” in three years (~25% of the Daily Active People on their properties). Let’s say the average DAU on Meta AI asks 360 queries per year. If 20% of these queries have commercial intent, that’s 72 high-intent queries per user per year for which Meta can show ads to users later. If ~10% of these high-intent ads convert and each conversion is worth $10-20, that’s 7.2 conversions, or roughly $70-140 per user per year, which adds up to a ~$70-140 billion incremental revenue opportunity for Meta across 1 billion users. Remember, an average Meta DAU likely sees more than 30k ads on Meta per year. Even if there is some “cannibalization” here (perhaps Meta would have been able to show some of these ads without the help of “Meta AI” anyway), you can still sense that the incremental opportunity can become quite large for incumbent companies with an existing userbase and SOTA advertising infrastructure. I did ask you to imagine because Meta doesn’t have a good model yet, but this is the bet they’re likely making. As their models get better over time, and as model quality becomes a lot less important for plain vanilla consumer AI queries than for something like coding, Meta thinks they can gain strong adoption with Meta AI.
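For readers who want to play with the assumptions, the back-of-the-envelope math above can be sketched in a few lines of Python. The function name and parameter names are hypothetical and exist only for illustration; every input is one of the imagined figures from the paragraph above, not reported data.

```python
def meta_ai_ad_opportunity(dau, queries_per_user, commercial_share,
                           conversion_rate, value_per_conversion):
    """Illustrative annual revenue estimate; all inputs are assumptions."""
    # Queries per user per year that carry commercial intent (360 * 20% = 72)
    high_intent_per_user = queries_per_user * commercial_share
    # Conversions per user per year (72 * 10% = 7.2)
    conversions_per_user = high_intent_per_user * conversion_rate
    # Total annual revenue across all daily active users
    return dau * conversions_per_user * value_per_conversion


# Imagined inputs from the text: 1B DAU, 360 queries/year, 20% commercial
# intent, ~10% conversion, $10-20 per conversion.
low = meta_ai_ad_opportunity(1_000_000_000, 360, 0.20, 0.10, 10)
high = meta_ai_ad_opportunity(1_000_000_000, 360, 0.20, 0.10, 20)

print(f"${low / 1e9:.0f}B to ${high / 1e9:.0f}B")  # prints "$72B to $144B"
```

The unrounded range is $72-144 billion, which is where the ~$70-140 billion figure comes from. Halving any single input (say, 10% commercial intent instead of 20%) halves the result, so the estimate is only as good as the weakest assumption.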

For Alphabet, you won’t have to imagine much because they already have a pretty good model for consumer AI queries, and unlike OpenAI, they won’t face a dilemma over serving free users with a better model because they do have clear visibility into monetization. From Alphabet’s 4Q’25 earnings call:

“We've been deploying Gemini models to improve query understanding at a rate of almost a launch per month for the last 2 years. These improvements drive better query matching, ranking and quality, making search ads even more effective. With Gemini across our ads quality stack, we evaluate relevance with greater accuracy than with previous generations of models. This has significantly improved our ability to systematically deliver more helpful high-quality ads, contributing to a meaningful reduction in irrelevant ads served. Gemini's understanding of intent has increased our ability to deliver ads on longer, more complex searches that were previously challenging to monetize. Gemini models also have a significant impact on query understanding in non-English languages, expanding opportunities for businesses to scale globally”

While reading the WSJ piece on OpenAI’s potential pivot, I was reminded of Brian Chesky’s point in an interview about how he noticed that so few companies in Y Combinator are pursuing the consumer market in AI and most are just focused on enterprise. While there is certainly a lot of truth to the fact that enterprises may have far greater willingness to pay directly for AI than consumers ever will, it can mask an important truth: consumer AI is simply too hard for startups, and incumbents may be destined to dominate consumer AI in the long term.

In addition to a “Daily Dose” (yes, DAILY) like this one, MBI Deep Dives publishes one Deep Dive on a publicly listed company every month. You can find all 66 Deep Dives here.

Current Portfolio:

Please note that these are NOT my recommendations to buy/sell these securities, but just a disclosure from my end so that you can assess potential biases that I may have because of my own personal portfolio holdings. Always consider my write-up my personal investing journal and never forget that my objectives, risk tolerance, and constraints may bear no resemblance to yours.

My current portfolio is disclosed below: