Anthropic's focused bet, Portfolio Change

A programming note: As a reminder, I will take the next two days off for Thanksgiving, and hope to be back on Saturday. Happy Thanksgiving, everyone!

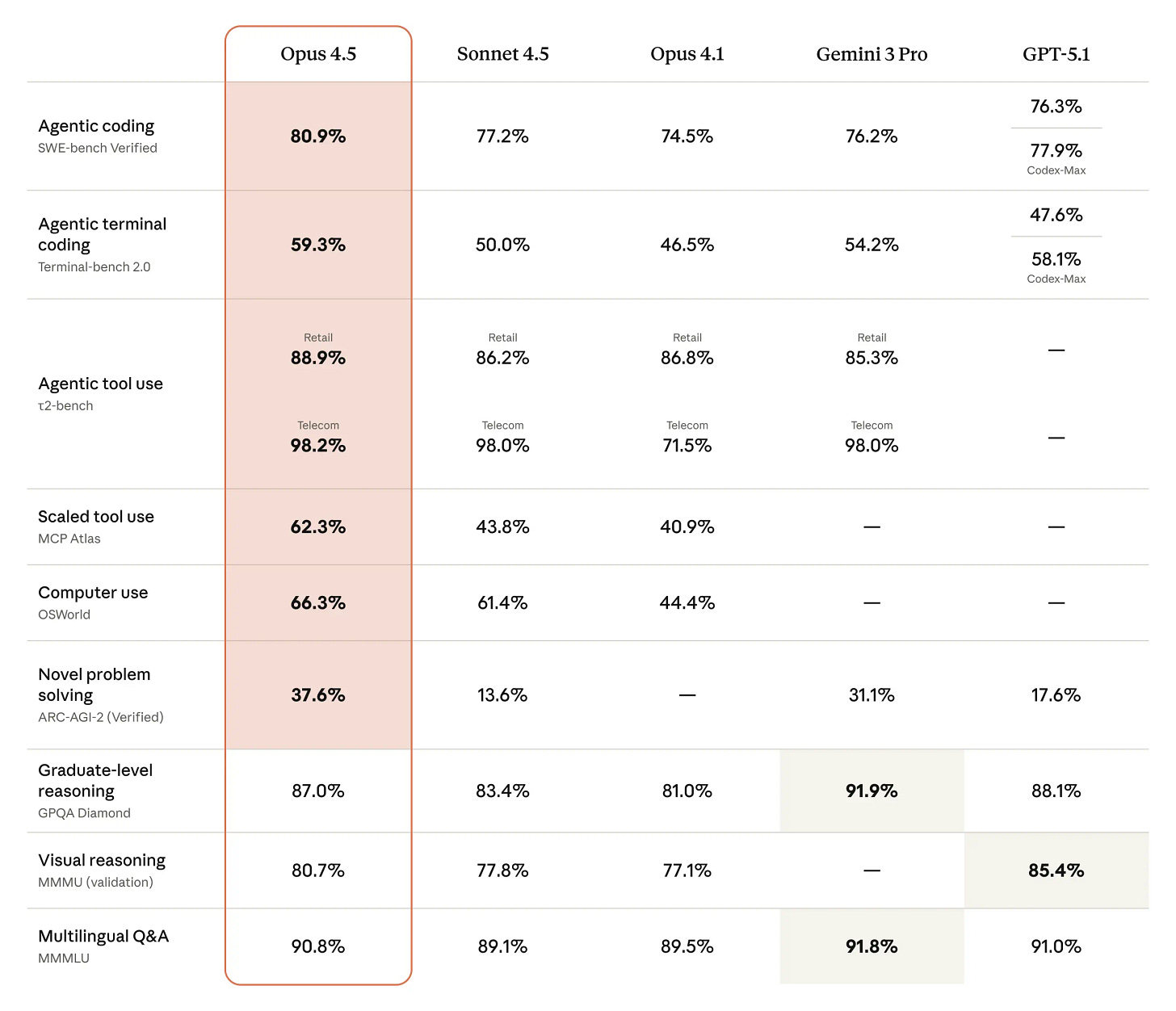

For a brief moment, Gemini was leading the benchmarks, until Anthropic released Opus 4.5 this week. For the uninitiated, Sonnet and Opus are two different models within Anthropic’s family of AI systems, each optimized for different use cases. Sonnet is the practical, efficient workhorse designed for speed and scale at a lower cost, while Opus is the premium reasoning engine for mission-critical tasks demanding the highest level of intelligence and accuracy. Opus 4.5 outshines everyone else, including Gemini 3 Pro, in several areas, as shown below.

Speaking of Gemini 3.0, let me take this opportunity to share my experience of using the model as well. While benchmarks are important as a standardized evaluation, our own personal experience can differ from what such benchmarks show. That is indeed the case for me with Gemini 3.0, although I’m not willing to make any big claims since I’m not sure how generalizable my own experience is.

Let me show you one example to make my point. I gave the same prompt to both ChatGPT and Gemini: “what are the top 5 hotel chains in the US? what are their aggregate market share in the US?” Click ChatGPT and Gemini to see both of their answers.

Not only does ChatGPT’s answer appear to be more on the money here, it also gives me ample links and references that I can click to check and research the quality of its answers. Gemini didn’t bother to include any clickable links. This isn’t really a one-off experience for me either. The question I asked myself this week was whether I would be able to tell that Alphabet had launched a new Gemini model recently. For text-based reasoning, my answer is no. For photo and video generation in Gemini 3.0, however, the answer is yes. Given that context, I intend to remain a subscriber to both ChatGPT and Gemini.

While we are talking about benchmarks, Ilya Sutskever also observed yesterday that there does seem to be a disconnect between what the evals show and the real-world impact of these models:

This is one of the very confusing things about the models right now. How to reconcile the fact that they are doing so well on evals? You look at the evals and you go, “Those are pretty hard evals.” They are doing so well. But the economic impact seems to be dramatically behind.

One thing you could do, and I think this is something that is done inadvertently, is that people take inspiration from the evals. You say, “Hey, I would love our model to do really well when we release it. I want the evals to look great. What would be RL training that could help on this task?” I think that is something that happens, and it could explain a lot of what’s going on.

If you combine this with generalization of the models actually being inadequate, that has the potential to explain a lot of what we are seeing, this disconnect between eval performance and actual real-world performance

Perhaps many of these AI companies are falling prey to Goodhart’s law: “When a measure becomes a target, it ceases to be a good measure.”

That’s why it may be increasingly relevant to observe what real-world users are saying instead of focusing too much on evals. On that point, I have noticed several people, including Ben Thompson, echoing my concerns about Gemini 3.0, although there is indeed a broad consensus around Gemini’s superior image generation capability.

Given this context, I thought a blog post from Cognition a couple of months ago was particularly interesting. Cognition’s primary product is “Devin”, an “AI software engineer” capable of independently handling entire software development projects. Some excerpts from that blog post:

We rebuilt Devin for Claude Sonnet 4.5.

Why rebuild instead of just dropping the new Sonnet in place and calling it a day? Because this model works differently—in ways that broke our assumptions about how agents should be architected.

Because Devin is an agent that plans, executes, and iterates rather than just autocompleting code (or acting as a copilot), we get an unusual window into model capabilities. Each improvement compounds across our feedback loops, giving us a perspective on what’s genuinely changed. With Sonnet 4.5, we’re seeing the biggest leap since Sonnet 3.6 (the model that was used with Devin’s GA): planning performance is up 18%, end-to-end eval scores up 12%, and multi-hour sessions are dramatically faster and more reliable.

In order to get these improvements, we had to rework Devin not just around some of the model’s new capabilities, but also a few new behaviors we never noticed in previous generations of models.

A customer writing such a blog post is likely a much higher signal about model quality than any benchmark out there. Remember, this was about Sonnet 4.5, so the recently released Opus 4.5 is expected to be even better.

Anthropic is currently “valued” at $350 billion in the private market. As recently as early 2024, it was “valued” at $18 billion. So, the company basically became a 20-bagger in less than two years! One of the key differences between OpenAI and Anthropic is that while OpenAI’s canvas appears to be very, very open-ended and it cannot say “no” to almost any opportunity, Anthropic is almost at the opposite extreme. Anthropic is essentially a very focused bet on coding!

Sholto Douglas from Anthropic explained why Anthropic made such a choice:

Anthropic has been laser‑focused on coding, computer use, and things we think will have direct economic impact within the next six months.

One thing Anthropic has noticeably not focused on compared to DeepMind and OpenAI is mathematical reasoning. DeepMind and OpenAI have been pursuing mathematical reasoning because of implications for science and because many people there love math and want to see it progress.

We’ve had to reluctantly sacrifice focus on that to focus on near‑term economic impact with models.

Later in the podcast, he elaborated on why coding is the key bet for them. Some excerpts from the podcast (slightly edited for clarity):

Two reasons.

First, we think it’s the thing that will allow us to assist ourselves in AI research faster. There’s this notion of automating AI research. The speed of takeoff—the speed of progress—is driven by how much AI can assist AI research. Pre-fetching this is important.

Second, we think coding is the nearest‑term tractable problem domain in terms of economic impact. For Anthropic to be a viable research program that can work on the things we think are important, we need economic return. Coding is a huge market full of keen early adopters who are excited to try and switch tools.

There’s massive demand. There is dramatically more demand for software than there is good software. We’ve seen this in previous generations of compilers, web abstractions, etc.—demand for software keeps growing.

Models are better at coding earlier than almost anything else because coding is uniquely tractable: the data exists, you can containerize and run things in parallel, you can run unit tests and know when something works.

Self‑driving is uniquely hard because the car needs to work the first time. Coding is different: the model can fail a hundred times; as long as it succeeds once, it’s fine. There’s tractability and replayability that don’t exist when you directly touch the real world…You wouldn’t want an AI lawyer arguing your case in court right now
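To put a rough number on the “fail a hundred times, succeed once” point in the excerpt above: if each attempt passes the unit tests with probability p, and attempts are independent, the chance that at least one of k attempts passes is 1 - (1 - p)^k. The sketch below is only an illustration, not something from the podcast. The function name is made up, the 5% per-attempt success rate is a hypothetical figure, and independence between attempts is an assumption that real model retries may not satisfy.

```python
def prob_at_least_one_success(p: float, attempts: int) -> float:
    """Probability that at least one of `attempts` independent tries succeeds.

    `p` is a hypothetical per-attempt success rate (e.g. the chance that a
    generated patch passes the unit tests). Independence is an assumption.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    # Complement of "every attempt fails".
    return 1.0 - (1.0 - p) ** attempts


if __name__ == "__main__":
    # Hypothetical 5% per-attempt success rate, for illustration only.
    for k in (1, 10, 100):
        print(f"{k:>3} attempts: {prob_at_least_one_success(0.05, k):.1%}")
```

Under these assumptions, even a 5% per-attempt success rate gives roughly a 99% chance of at least one passing result in 100 attempts, which is only useful because a unit test can tell you which attempt worked. A self-driving car or a courtroom argument gets no such retries.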

So far, the bet is clearly working!

In addition to a “Daily Dose” (yes, DAILY) like this one, MBI Deep Dives publishes one Deep Dive on a publicly listed company every month. You can find all 65 Deep Dives here.

Current Portfolio:

Please note that these are NOT my recommendations to buy/sell these securities, but just a disclosure from my end so that you can assess potential biases that I may have because of my own personal portfolio holdings. Always consider my write-ups my personal investing journal, and never forget that my objectives, risk tolerance, and constraints may bear no resemblance to yours.

I made a couple of changes yesterday, which I will discuss behind the paywall.