Meta's Agentic AI Ambitions

When Meta started building its Superintelligence team (MSL) in mid-2025, I certainly expected faster progress than what we have seen so far. I wonder if the Llama 4 fiasco, as well as the backlash against “Vibes” last year, made Meta much more tentative than usual about releasing new products and features. This is making Meta increasingly look like a laggard in the AI race despite spending capital on compute and talent commensurate with the other players leading the race.

Despite looking like a laggard, Meta also seems to be on a tuck-in acquisition spree these days, with a new deal seemingly every other week. Just in March, Meta acquired Moltbook, a social media platform for AI agents, and struck a non-exclusive licensing deal with Dreamer, a startup that helps consumers build AI agents. Since the Dreamer co-founders joined MSL as part of the deal, this is essentially yet another roundabout “acquisition” of the kind Silicon Valley invented for the current antitrust environment. The facts that Dreamer raised $56 million at a $500 million valuation back in December 2024 and that Dreamer’s CEO was a former CTO of Stripe make me think Meta may have paid a good premium to get the Dreamer team into MSL. Just last week, Dreamer’s now former CEO demoed the product in this podcast, and it indeed looks quite promising. In fact, while reacting to the acquisition news, Alex Heath mentioned the following in his newsletter:

I was recently given a demo of the product by co-founder (and former Stripe CTO) David Singleton. I was so intrigued that I demanded to be added to the beta. I clearly wasn’t the only one who was impressed.

With the Manus and Dreamer deals, it appears Meta is getting more serious about agent infrastructure above the model layer. In fact, even if Meta remains a laggard in building a SOTA model for the foreseeable future, their core business continues to offer compelling opportunities to integrate AI on top of the model layer. Even though Meta may seem largely absent from the current agentic AI fever, a blog post (h/t Eric Seufert) from Meta last week made me appreciate how Meta is already using AI agents in their advertising infrastructure. Some key excerpts from Meta’s blog post (emphasis mine):

Optimizing these ML models has traditionally been time-consuming. Engineers craft hypotheses, design experiments, launch training runs, debug failures across complex codebases, analyze results and iterate. Each full cycle can span days to weeks. As Meta’s models have matured over the years, finding meaningful improvements has become increasingly challenging. The manual, sequential nature of traditional ML experimentation has become a bottleneck to innovation.

To address this, Meta built the Ranking Engineer Agent (REA), an autonomous AI agent designed to drive the end-to-end ML lifecycle and iteratively evolve Meta’s ads ranking models at scale.

REA addresses three core challenges in autonomous ML experimentation:

Long-Horizon, Asynchronous Workflow Autonomy: ML training jobs run for hours or days, far beyond what any session-bound assistant can manage. REA maintains persistent state and memory across multiround workflows spanning days or weeks, staying coordinated without continuous human supervision.

High-Quality, Diverse Hypothesis Generation: Experiment quality is only as good as the hypothesis that drives it. REA synthesizes outcomes from historical experiments and frontier ML research to surface configurations unlikely to emerge from any single approach, and it improves with every iteration.

Resilient Operation Within Real-World Constraints: Infrastructure failures, unexpected errors and compute budgets can’t halt an autonomous agent. REA adapts within predefined guardrails, keeping workflows moving without escalating routine failures to humans.

In the first production validation across a set of six models, REA-driven iterations doubled average model accuracy over baseline approaches. This translates directly to stronger advertiser outcomes and better experiences on Meta platforms.

REA amplifies impact by automating the mechanics of ML experimentation, enabling engineers to focus on creative problem-solving and strategic thinking. Complex architectural improvements that previously required multiple engineers over several weeks can now be completed by smaller teams in days.

Early adopters using REA increased their model-improvement proposals from one to five in the same time frame. Work that once took two engineers per model now takes three engineers across eight models.
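Taking the staffing and proposal figures quoted above at face value, a quick back-of-the-envelope calculation shows how much leverage they imply. This is only a sketch: the inputs come from Meta's blog post, while the "staffing leverage" ratio and the variable names are my own framing, not a metric Meta reports.

```python
# Back-of-the-envelope check of the figures quoted from Meta's blog post.
# The "leverage" framing is an assumption of this sketch, not Meta's metric.
engineers_per_model_before = 2 / 1   # two engineers per model
engineers_per_model_after = 3 / 8    # three engineers across eight models

# How many times fewer engineers each model needs after REA.
staffing_leverage = engineers_per_model_before / engineers_per_model_after

# Model-improvement proposals went from one to five in the same time frame.
proposal_multiplier = 5 / 1

print(f"Engineers per model: {engineers_per_model_before:.2f} -> {engineers_per_model_after:.3f}")
print(f"Staffing leverage: {staffing_leverage:.2f}x")
print(f"Proposal throughput: {proposal_multiplier:.0f}x")
```

In other words, the quoted numbers imply each model needs a bit more than five times fewer engineers, alongside a fivefold increase in proposals. The two figures may overlap rather than compound, so I would not multiply them together.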

One of the interesting bits from the blog post is that, for long-horizon workflow autonomy, Meta built REA on an internal AI agent framework called “Confucius”, which they elaborated on in this paper back in February 2026. Often, when tech companies try to improve AI coders, they focus on making the underlying AI models (like GPT or Claude) smarter. However, the paper argued that the “scaffolding”, i.e. the software environment, memory systems, and tools built around the AI, is just as important. When working on big codebases, AI agents frequently get overwhelmed by reading too much code, forget their original plan during long tasks, or repeat the same mistakes.
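To make the idea of scaffolding a bit more concrete, here is a toy sketch of the three failure modes mentioned above: too much code in context, a forgotten plan, and repeated mistakes. Everything in it (the `Scaffold` class, its fields, the 80-character budget) is hypothetical and purely illustrative. It is not how Confucius is actually built, and no real model is called.

```python
from dataclasses import dataclass, field


@dataclass
class Scaffold:
    """Hypothetical scaffolding around a model; NOT Confucius's actual design."""
    plan: list                      # the original plan, kept for the whole task
    context_budget: int = 80        # toy cap on characters of code per prompt
    notes: list = field(default_factory=list)   # memory of what worked
    failed: set = field(default_factory=set)    # memory of what did not

    def build_prompt(self, step, code):
        # Trim the code so the model is not flooded with context.
        snippet = code[: self.context_budget]
        avoid = ", ".join(sorted(self.failed)) or "none"
        # Repeat the plan in every prompt so it is not forgotten mid-task.
        return (
            f"PLAN: {' > '.join(self.plan)}\n"
            f"CURRENT STEP: {step}\n"
            f"ALREADY FAILED: {avoid}\n"
            f"CODE: {snippet}"
        )

    def record(self, action, succeeded):
        # Persist outcomes so the same mistake is not attempted twice.
        if succeeded:
            self.notes.append(f"done: {action}")
        else:
            self.failed.add(action)

    def allowed(self, action):
        return action not in self.failed


scaffold = Scaffold(plan=["reproduce bug", "patch parser", "run tests"])
scaffold.record("patch lexer", succeeded=False)
prompt = scaffold.build_prompt("patch parser", "def parse(tokens): ..." * 50)
print(prompt)
print(scaffold.allowed("patch lexer"))
```

The point of the sketch is that none of this logic lives inside the model. The same model would see a shorter, better-organized prompt simply because of the code wrapped around it.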

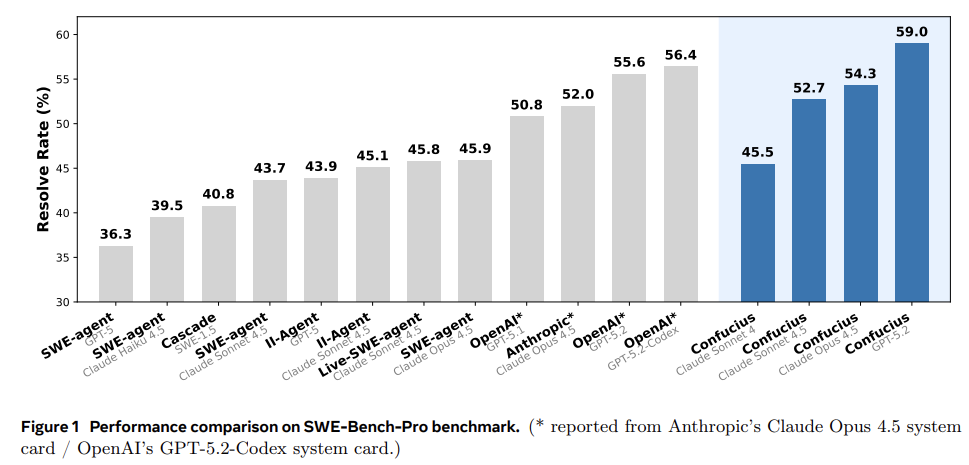

The most interesting takeaway from the paper is that a great setup can compensate for a less powerful AI. The researchers showed that a weaker model (Claude 4.5 Sonnet) using the Confucius scaffolding successfully fixed slightly more bugs (52.7%) than a stronger, more expensive model (Claude 4.5 Opus) using Anthropic’s standard setup (52.0%). When powered by the GPT-5.2 model, Confucius Code Agent successfully resolved 59% of the real-world bugs on the SWE-Bench-Pro test, beating both prior academic research and the official corporate systems built by OpenAI and Anthropic under identical conditions. If such scaffolding can consistently beat the more expensive SOTA models, it could put a ceiling on SOTA model developers’ ability to exercise pricing power. It remains to be seen whether such scaffolding can outperform more expensive SOTA models in a wide range of scenarios. Nonetheless, the key takeaway is quite encouraging for all the tech companies that will not have a SOTA model: they may still be able to capture value from better scaffolding.

Back in the 2Q’25 call, Zuckerberg mentioned the following:

Over the last few months, we’ve begun to see glimpses of our AI systems improving themselves. And the improvement is slow for now, but undeniable and developing superintelligence

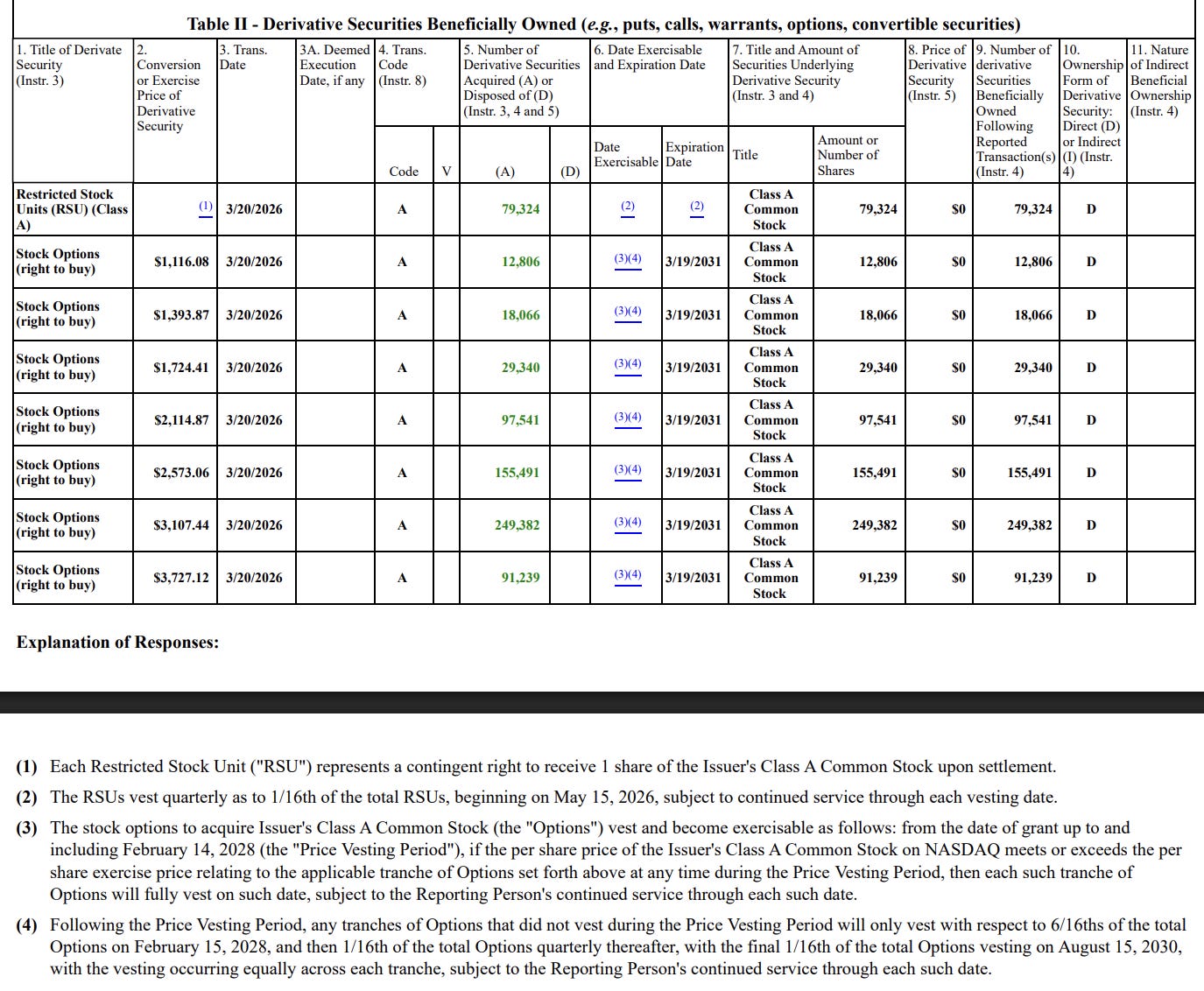

The paper and the blog post essentially validated the claims Zuckerberg made a couple of quarters ago. The autonomous and self-recursive nature of REA is yet another indication that Meta has a pretty good shot at monetizing their capex even if they remain a laggard in building a SOTA model. Perhaps to hint at their confidence, Meta yesterday disclosed a new stock options program for their Chief Technology Officer Andrew Bosworth, Chief Product Officer Chris Cox, Chief Operating Officer Javier Olivan, Chief Financial Officer Susan Li, Chief Legal Officer C.J. Mahoney and Vice Chairman Dina Powell McCormick.

While Meta has historically doled out mostly RSUs, this is a welcome change from a shareholder’s perspective, as the options would expire worthless if the stock price doesn’t exceed the hurdles. To receive even the first tranche of the options, Meta stock needs to increase by 86% from the current price, and to receive the full options package, the stock needs to be 6x the current price within the next five years! For your reference, I am showing the stock options program for the CTO below (although the numbers vary for each executive, the structure is quite similar for the other executives).
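To put those hurdles in annualized terms, here is a quick sketch of the implied compound annual growth rate (CAGR). The 6x multiple and the five-year window come from the paragraph above. Applying the same five-year window to the 86% first-tranche hurdle is my own assumption, since the deadline for that tranche is not stated here, and dividends are ignored.

```python
def implied_cagr(multiple: float, years: float) -> float:
    """Annualized growth rate needed to turn 1.0 into `multiple` over `years`."""
    return multiple ** (1 / years) - 1


# Full package: stock must reach 6x the current price within five years.
full_package = implied_cagr(6.0, 5)

# First tranche: +86% from the current price.
# ASSUMPTION: the same five-year window applies; the source does not say.
first_tranche = implied_cagr(1.86, 5)

print(f"Full package hurdle:  {full_package:.1%} per year")
print(f"First tranche hurdle: {first_tranche:.1%} per year")
```

Under these assumptions, the full package requires roughly 43% a year compounded for five years, while the first tranche requires roughly 13% a year if it takes the full five years to get there.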

As a minority shareholder of Meta, I also very much appreciate that Zuckerberg excluded himself from this options program as he probably doesn’t need any more incentive to see Meta thrive.

In addition to “Daily Dose” (yes, DAILY) like this, MBI Deep Dives publishes one Deep Dive on a publicly listed company every month. You can find all the 67 Deep Dives here.

Current Portfolio:

Please note that these are NOT my recommendations to buy/sell these securities, but just a disclosure from my end so that you can assess potential biases that I may have because of my own personal portfolio holdings. Always consider my write-ups my personal investing journal, and never forget that my objectives, risk tolerance, and constraints may bear no resemblance to yours.

My current portfolio is disclosed below: