The Fat-Tailed Economics of AI

Anthropic yesterday disclosed that its run-rate revenue has surpassed $30 Billion (I appreciate that, unlike most people in tech, they didn’t call it ARR). To contextualize how mind-boggling the growth is, Anthropic ended 2025 with an annual run rate of just $9 Billion, which then shot to $19 Billion by February. Basically, Anthropic seems to be adding its entire 2025 run-rate revenue every month now!

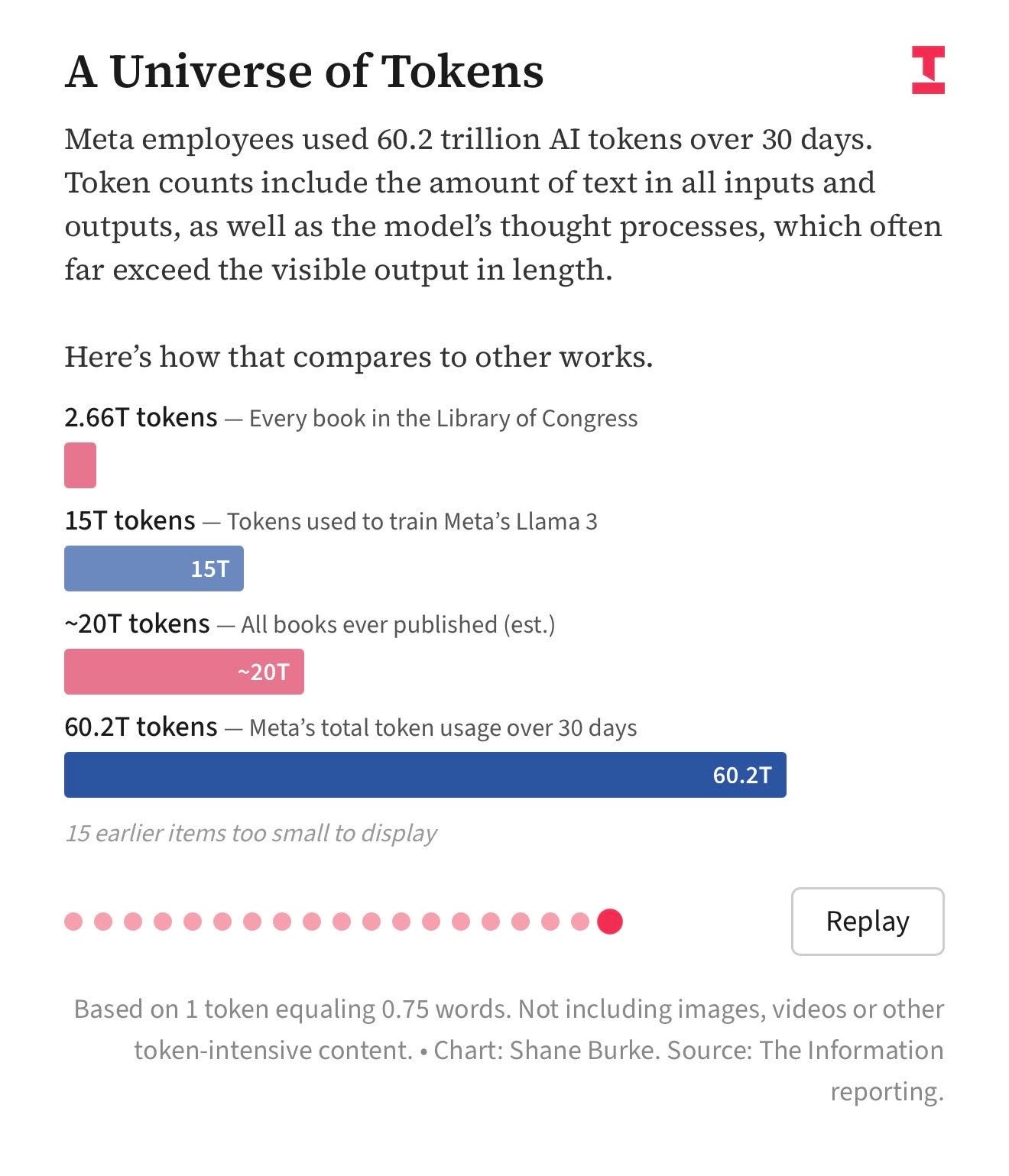

The meteoric growth starts to make some sense when you see how some of its customers are behaving. The Information reported yesterday that Meta (likely one of Anthropic’s major customers) is literally running an internal “competition” among its employees to see who can spend the highest number of tokens. Meta employees apparently used 60 trillion tokens in just the last 30 days. To convey the scale of such token consumption, The Information helpfully mentioned that all the books ever published are estimated to add up to only ~20 trillion tokens! You can probably see now how Anthropic is growing its revenue so fast when its end customers are essentially “bragging” about using as many tokens as possible.

Given how fast Anthropic is growing its revenue and how willing its customers seem to be to spend more tokens, does it mean Anthropic is also making gross profit hand over fist in inference? That may sound like a rhetorical question, but it is actually a surprisingly difficult one to answer. I have discussed AI’s speculative economics before (see here, and here), but I have a newfound appreciation for the uncertainty in AI economics after reading two of Anjali Shrivastava’s pieces on this topic.

Anjali once left a couple of thoughtful replies to my tweets, which made me follow her on X. I then came across her article “A token is not a fixed unit of cost”. Unfortunately, I am one of those guys who always has at least 50 tabs open on his PC, and Anjali’s piece ended up in that graveyard. Thankfully, one of her recent tweets showed up on my feed yesterday and reminded me that I never finished reading it. Having now read both pieces, I can say they should be required reading for anyone interested in understanding AI’s economics.

In the first piece, titled “A token is not a fixed unit of cost” (originally published in August 2025), Anjali highlighted how fundamentally different AI is from the traditional software cost structure. Traditional software businesses thrive on the “law of large numbers”. The low costs of light users subsidize the high costs of heavy users, resulting in highly predictable, profitable gross margins. AI breaks this economic law because its underlying costs are fundamentally non-linear. When an AI generates a response, it must continuously “re-read” the entire preceding conversation to produce the next word. Therefore, generating the 10,000th word requires far more computing power than the 1st word: the work per word grows with the length of the conversation, so the total cost of a long session grows much faster than its length. This creates infinite-variance, “fat-tailed” financial risk: a tiny fraction of power users running complex tasks can rack up massive compute bills that can wipe out a good chunk of the gross profits generated by the majority of “regular” users.

I asked Gemini to give me a simple analogy to drive this point home and this is what Gemini came up with:

“Imagine running a taxi company with a flat $10 fare. In a normal business, fuel consumption is predictable. In the AI world, the engine burns 1 gallon of gas for the first mile, 2 gallons for the second, and 100 gallons for the tenth. A short trip is highly profitable; a long trip bankrupts the driver. Standard software pricing assumes fixed fuel efficiency, but AI compute costs compound with every mile.”
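To put rough numbers on the taxi analogy, here is a minimal Python sketch. It is not Anjali's model or any lab's actual cost function. It assumes that producing each new token costs work proportional to the number of tokens already in the conversation (the "re-reading" part only; real inference also has a large component that is roughly constant per token), and the flat price and cost per unit of work are made-up constants.

```python
# Illustrative cost model only: every constant below is an assumption,
# not a measured figure from any AI provider.


def attention_work(n_tokens: int) -> int:
    """Total units of 're-reading' work to generate n_tokens.

    Assumes the i-th token costs i units because it looks back over
    everything before it, so the total is 1 + 2 + ... + n = n(n+1)/2,
    which grows roughly with the square of the length.
    """
    return n_tokens * (n_tokens + 1) // 2


def margin(n_tokens: int, flat_price: float, cost_per_unit: float) -> float:
    """Gross profit on one request billed at a flat price (hypothetical pricing)."""
    return flat_price - cost_per_unit * attention_work(n_tokens)


FLAT_PRICE = 10.0      # hypothetical flat fee per request (the $10 taxi fare)
COST_PER_UNIT = 1e-6   # hypothetical cost of one unit of re-reading work

for n in (100, 1_000, 10_000):
    work = attention_work(n)
    print(f"{n:>6} tokens: {work:>11,} units of work, "
          f"margin {margin(n, FLAT_PRICE, COST_PER_UNIT):.2f}")
```

Under these made-up numbers, a 100-token reply costs a rounding error, while a 10,000-token one costs about five times the flat fee: 100x the length, but roughly 10,000x the re-reading work.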

Anjali then wrote a follow-up piece in January 2026 titled “Why fat-tailed costs emerge at scale”. It would be quite understandable to think, “well, even if the cost compounds, AI labs can fix the problem by simply charging users ‘per token’ (by the mile).” However, true unit costs are still unpredictable because they depend on the real-time congestion of the entire data center. To be profitable, AI providers must process multiple users on the same physical servers simultaneously (batching). But complex AI tasks devour massive amounts of temporary working memory. If a random spike of users submits long tasks at the exact same millisecond, their combined memory needs balloon. The system hits a physical “memory wall,” servers slow to a crawl, and efficiency plummets. Thus, the true cost of processing an AI request is a moving target, dictated entirely by the aggregate traffic jam happening on the server at that exact moment.

Again, to make the point even clearer, let me go back to Gemini for an analogy:

“Think of an airline trying to maximize profit by filling every seat. Normally, a passenger's weight is fixed. In the AI airline, passengers' luggage magically expands while the plane is in the air depending on how long their trip is. If too many people with expanding luggage happen to be on the same flight, the plane gets too heavy and stalls. You cannot accurately price a ticket in advance if the plane's maximum capacity fluctuates dynamically during the flight.”
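The airline picture can also be sketched as a toy batching model in Python. Everything here is an assumption for illustration: the per-token memory footprint, the memory budget, the first-come-first-served admission rule, and the idea that a server's cost for a time slice is split evenly across whoever shares the batch. Real serving systems schedule far more cleverly, but the direction of the effect is the same.

```python
# Toy model of batching under a fixed memory budget.
# All numbers and the admission rule are assumptions for illustration.


def working_memory_bytes(context_tokens: int, bytes_per_token: int) -> int:
    """Temporary memory held for one in-flight request; grows with its context."""
    return context_tokens * bytes_per_token


def max_batch_size(memory_budget: int, contexts: list[int], bytes_per_token: int) -> int:
    """Count how many queued requests fit in memory at once, in arrival order."""
    used = 0
    admitted = 0
    for ctx in contexts:
        need = working_memory_bytes(ctx, bytes_per_token)
        if used + need > memory_budget:
            break  # the "memory wall": nobody else fits on this server
        used += need
        admitted += 1
    return admitted


def cost_per_request(server_cost: float, batch_size: int) -> float:
    """Split a fixed server cost evenly across the requests sharing the batch."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return server_cost / batch_size


BYTES_PER_TOKEN = 500_000     # hypothetical working-memory footprint per token
MEMORY_BUDGET = 40 * 10**9    # hypothetical 40 GB available for working memory

short_chats = [1_000] * 100           # 100 short conversations
mixed = [60_000] + [1_000] * 99       # one long task arrives first

for name, queue in (("short chats only", short_chats), ("one long task first", mixed)):
    batch = max_batch_size(MEMORY_BUDGET, queue, BYTES_PER_TOKEN)
    print(f"{name}: batch of {batch}, cost share {cost_per_request(1.0, batch):.4f}")
```

With these assumed numbers, 80 short chats fit on the server together, but only 21 requests fit once a single 60,000-token task is on board, so the cost share of every short chat in that batch rises almost fourfold even though those users did nothing different.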

Make no mistake: Anthropic’s exponential growth is downright incredible, but I hope Anjali’s pieces provide ample food for thought on why investors still have a lot more work to do to value an AI lab such as Anthropic beyond looking at the revenue chart. In fact, reading Anjali’s pieces made me rethink Microsoft’s position in the value chain, which I will discuss behind the paywall.

In addition to a “Daily Dose” (yes, DAILY) like this one, MBI Deep Dives publishes one Deep Dive on a publicly listed company every month. You can find all 67 Deep Dives here.

In her follow-up piece, Anjali made a very interesting observation (emphasis mine):